Generative Suspicion: What Defense Lawyers Must Know About AI-Generated Police Reports

by Richard Resch

Police departments nationwide are racing to adopt artificial intelligence that transcribes body-camera footage, translates witness statements, and drafts investigative narratives. But these tools introduce profound risks to factual accuracy and due process that defense attorneys must challenge at every stage of a case.

A recent article published November 11, 2025, in Forensic Focus examines the emerging dangers of generative AI in law enforcement and the policy guardrails courts and agencies are beginning to require. For defense counsel and defendants, the message is unequivocal: AI-touched evidence represents a new frontier of vulnerability, demanding rigorous disclosure, independent verification, and thorough scrutiny.

The Core Danger: Confident but Entirely False Narratives

The fundamental problem with generative AI is its capacity for what the National Institute of Standards and Technology (“NIST”) calls “confabulation,” i.e., the production of fluent, authoritative statements that are entirely fabricated. These systems predict plausible language patterns, not truth. An AI-generated police report might confidently include invented dates, names, or actions that never occurred.

Any narrative touched by AI must be treated as presumptively unreliable until every factual assertion can be traced to its original source and independently verified. The polished police report may no longer represent a human investigator’s direct observations but rather a machine’s probabilistic reconstruction, one that could be fundamentally distorted.

In a groundbreaking 2025 law review article, Andrew Guthrie Ferguson, a professor at George Washington University Law School and a leading expert on police surveillance technologies, coined the term “generative suspicion” to describe how AI-assisted police reports threaten the integrity of criminal investigations. Writing in the Northwestern University Law Review, Ferguson warned that these systems risk replacing verified evidence with algorithmic logic in the probable cause narratives that form the bedrock of the criminal justice system.

Ferguson’s article, the first law review article to comprehensively address AI-assisted police reports, highlights a troubling reality. Police reports often serve as “the only official memorialization of what happened during an incident,” shaping not only probable cause determinations but also pretrial detention decisions, motions to suppress, plea bargains, and trial strategy. When machine-generated language infiltrates these foundational documents, the implications cascade through every stage of prosecution. Ferguson identifies core concerns about how the models were trained, their propensity for error and hallucination, bias in transcription, and the uncertainty surrounding how generative prompts ultimately shape the final report that prosecutors and judges rely upon.

How AI Report-Writing

Actually Works

To understand the vulnerability points defense counsel must challenge, it is essential to grasp how these systems function. Ferguson examines Axon’s Draft One, currently the market leader, revealing how body-camera audio becomes a police report.

The process begins when an officer’s body camera captures audio. After the encounter ends, the audio converts to text within minutes. The officer logs into Axon’s Evidence Dashboard and inputs basic parameters: crime type (from 12 preset categories), severity (felony, misdemeanor, infraction, or no charge), and whether an arrest was made.

Draft One then generates a pre-populated police report narrative that reads like a traditional report, not a transcript. The system includes bracketed “[Inserts]” requiring officers to manually add specific details the AI cannot generate such as names, legal justifications, and other particulars.

Critically, Axon’s guide instructs officers to “narrate out loud” during encounters and “echo back” what suspects say so the transcript captures complete information. This reveals a fundamental shift. Officers are being trained to perform policing in ways that optimize AI transcription. The technology is reshaping police behavior itself.

The Hallucination Problem:

When AI Fabricates Facts

One of the most alarming issues Ferguson identifies is AI hallucination – when the system generates plausible-sounding but entirely false information. Large language models are designed to predict the next most probable word in a sequence based on patterns in their training data. Sometimes these predictions are simply wrong, and the system confidently presents fabricated details.

As Ferguson explains, when you “peel open a large language model you won’t see ready-made information waiting to be retrieved. Instead, you’ll find billions and billions of numbers” used to calculate responses from scratch. The model is “more like an infinite Magic 8 Ball than an encyclopedia.”

The King County Prosecuting Attorney’s Office in Seattle documented a concrete example of such a hallucination in a September 2024 memo. Chief Deputy Prosecutor Daniel J. Clark cited a report where an AI tool, Draft One, “hallucinated” that an officer was present at a crime scene when, in fact, they were not. Following this discovery, the prosecutor’s office warned law enforcement: “For now, our office has made the decision not to accept any police narratives that were produced with the assistance of AI.” Clark noted that the “consequences will be devastating” if an officer testified to such an error, representing a powerful precedent on the technology’s assumed reliability.

Ferguson also reveals a disturbing reality. Some police departments cannot identify which reports were AI-generated because officers have been cutting and pasting AI drafts into official reports without disclosure. While Axon’s system requires officers to sign an acknowledgment that AI generated the report, that disclosure is not required to appear in the final published report used in criminal proceedings. In Lafayette, Indiana, police officials admitted they had “no way to search for these on our end” and that “it would be impossible to find them” without specific tracking protocols that many departments have not implemented.

There are critical weaknesses in how these systems are trained. Large language models learn from vast internet datasets, then refine through specialized training on police-report-specific materials. But the choice of training data fundamentally shapes what the AI can and cannot do well.

A system trained primarily on urban shoplifting reports might not adequately handle assault cases requiring medical documentation. One trained on standard traffic stops might fail with novel scenarios. Ferguson cites a real case where a tourist at Yellowstone National Park was arrested for physically handling a bison calf, an action that violated federal wildlife protection laws and ultimately led to the animal’s death. An AI system trained on typical urban or suburban policing data would have no exposure to wildlife interference crimes, potentially misclassifying the offense or omitting critical legal elements specific to federal park violations. Such gaps matter because criminal activity varies dramatically by jurisdiction and environment.

Additionally, the prompts programmed into these systems make hidden choices about word selection and tone. Systems replace certain words with “more objective” alternatives, meaning the actual language used during the encounter may never appear in the official record. These choices, Ferguson notes, are “hidden in the code, and need to be surfaced.”

Bias, Translation, and the Language Barrier

Beyond fabrication, these systems possess measurable bias. Large language models trained primarily on American English frequently misinterpret non-standard dialects, regional slang, or non-English speech. The Department of Justice’s (“DOJ”) December 3, 2024, report, “Artificial Intelligence and Criminal Justice: Final Report,” directly ties this performance gap to due-process violations, noting that a tool calibrated for American English may dangerously misrender Mexican Spanish or Haitian Creole.

Research documents “dialect prejudice” affecting AI predictions about speakers, with significant accuracy disparities for African American Vernacular English. Researchers attribute this to “insufficient audio data from Black speakers when training the models.”

A subtle mistranslation becomes sealed into the official record, potentially distorting a defendant’s words in ways nearly impossible to detect later. This leads to critical defense inquiries. Who verified the AI’s interpretation? Was a fluent human reviewer involved? Can the output meet evidentiary standards when the translation process itself is opaque?

These tools create serious security risks. When investigators copy personally identifiable information or sealed material into inadequately secured AI platforms, that data could be permanently exposed.

The systems are also vulnerable to “prompt-injection” attacks, a technique where malicious actors embed hidden instructions within seemingly innocent text that cause the AI to behave in unintended ways. For example, a suspect’s statement could contain carefully crafted language that instructs the AI to ignore certain facts, fabricate incriminating evidence, or leak sensitive case information. In one documented scenario, researchers showed how injected prompts could cause an AI system to disregard its safety guidelines and generate false content. For police reports, this means a sophisticated actor could potentially manipulate the AI into producing a narrative that omits exculpatory statements, mischaracterizes evidence, or even exposes confidential informant information, all without the reviewing officer realizing the report has been compromised.

Research from the Electronic Frontier Foundation reveals that Draft One does not preserve original drafts or complete prompt histories. When underlying prompts, logs, and intermediate outputs are never saved, the defense loses any ability to test provenance or authenticity, which is a chain-of-custody failure, not a procedural technicality. More critically, without these records, detecting whether a prompt injection attack occurred becomes virtually impossible.

Policy Consensus

Legal and policy frameworks are starting to emerge. For instance, King County, Washington’s prosecutor’s office has told law enforcement agencies it will no longer accept AI-assisted narratives after discovering errors.

The California Judicial Council adopted Rule 10.430 of the California Rules of Court, effective September 1, 2025. Under the rule, any California court that does not prohibit generative AI use by court staff or judicial officers must adopt a use policy by December 15, 2025. The policy must cover staff use for any purpose and judicial officer use for tasks outside their adjudicative role.

Required policy elements include: (1) prohibiting entry of confidential or non-public data into public AI systems, (2) prohibiting use that unlawfully discriminates, (3) requiring staff and judicial officers who create or use AI material to verify accuracy and correct errors, (4) requiring removal of biased or harmful content, (5) requiring disclosure when public-facing work consists entirely of AI outputs, and (6) mandating compliance with all applicable laws and professional conduct codes. Courts can either ban AI entirely or adopt compliant policies.

The Conference of State Court Administrators (“COSCA”), with support and web hosting by the National Center for State Courts (“NCSC”), has issued a policy paper titled “Generative AI and the Future of the Courts: Responsibilities and Possibilities.” Released in August 2024, the paper urges judicial leaders to establish clear standards for transparency, data privacy, human oversight, and governance frameworks whenever generative AI systems are used in court operations. Although not binding law, the COSCA guidance represents the leading best-practice framework emerging among court administrators nationwide for managing AI’s ethical and operational risks within the judiciary.

The DOJ’s 2024 report emphasizes that fairness, civil-rights protection, and due-process require pre-deployment testing, bias audits, human oversight, and documented governance of AI systems when they are utilized in criminal investigations, evidence analysis, or other criminal-justice functions. Practical experience shows that if prompts, intermediate outputs, audit logs, and user-IDs are not preserved, meaningful review and challenge of AI-derived work can become impossible, thereby undermining accountability, transparency, and the integrity of evidence.

The Policing Project at NYU Law has released its “Model Statute: Police Use of AI – Inventory and Disclosure,” which instructs law enforcement agencies to conduct public inventories of any “Covered AI” systems used to aid criminal investigations (including generative AI used to draft or materially assist police reports), to develop and publish agency policies governing those systems, to retain the first draft of any generative-AI-assisted report along with audit trails, and to explicitly disclose to both the prosecuting authority and the individual under investigation when Covered AI was used in a case. The model statute also requires that the name of each system, vendor, inputs, outputs, and authorized uses be publicly accessible, closing the transparency gap around police-AI deployment. This legislative model aligns squarely with the broader trend that disclosure, testing, verification, human-in-the-loop oversight, and auditability are becoming standard expectations for the use of AI in investigations and criminal justice workflows.

Across judicial rules, national guidance, DOJ policy, and model legislation, a clear framework is emerging. Agencies using generative AI must adopt enforceable policies or bans, protect confidentiality, mitigate bias, verify accuracy, preserve audit trails, and disclose when AI contributes to work product. For defense attorneys and defendants, the key takeaway is that AI in policing is no longer hypothetical; it is governed, regulated, and subject to scrutiny as a necessary part of discovery and defense strategy.

Practical Guidance for

Defense Counsel

For defense attorneys, the emerging standards provide a clear litigation roadmap. Ferguson’s research makes plain that discovery requests must go far beyond the final report.

Core System Information:

The specific model name, version, and vendor of any AI tool used (e.g., OpenAI’s GPT-4 Turbo for Draft One)

Training data specifications – what datasets were used, what incident types they covered, and what jurisdictions, languages, or dialects were included or excluded

Full system configurations, including “creativity” settings, objectivity or bias-mitigation parameters, content filters, and internal guardrails

Prompt and Processing Records:

Complete prompt histories showing exactly what questions were posed to the system and what parameters the officer input

All intermediate outputs and drafts, including rejected or edited versions

Records of automatic word substitutions or algorithmic replacements showing which words the system altered and how those changes affected meaning

Context markers such as crime-type classifications, severity categories, and arrest-status selections made before report generation

Audit Trails and Verification:

Access logs with timestamps, user IDs, IP addresses, and complete edit histories

Documentation showing whether officers met required edit or verification standards

Clear identification of which portions were AI-generated versus human-authored

Internal feedback surveys or performance logs officers completed about AI accuracy

Red-team assessments, penetration tests, and vendor or agency error-rate measurements

Vendor Contracts and

Procurement Files:

Procurement files and vendor contracts revealing accuracy claims, bias-mitigation promises, and audit provisions

The agency’s internal AI usage policy – when it may be used, when prohibited, which cases are excluded, and what human oversight applies

Documentation of pre-deployment testing, bias audits, and error-rate studies

Additional Strategic

Considerations:

For cases involving translation: verify that fluent human reviewers validated AI outputs and that original transcripts are preserved

For systems using public models: confirm whether personally identifiable information was entered, raising confidentiality concerns

Request the agency’s retention policies for prompts, outputs, and logs – how long records are maintained and when they’re purged

When AI contributes to charging decisions or narratives: consider requesting reliability hearings to evaluate whether AI content meets evidentiary standards

The Office of Management and Budget’s Memorandum M-24-18, “Advancing the Responsible Acquisition of Artificial Intelligence in Government,” directs federal agencies to treat AI acquisitions as a distinct category of IT procurement and to incorporate contractual provisions covering logging, cybersecurity, data-ownership, vendor audit cooperation, performance monitoring, incident-reporting, and transparency of training and testing. If a vendor contract mandated testing, validation or monitoring and the agency never executed it (or failed to secure required documentation of vendor safeguards), the foundation for AI-generated evidence is fundamentally weakened. In that event, the agency’s own failure to follow its procurement protocols creates a potent basis for challenging the reliability of any resulting AI-assisted report or analysis.

By pursuing these discovery items, defense counsel can ensure that AI use in policing meets the same transparency and accountability standards required of every other investigative tool. Treat AI-generated work as a potential vulnerability, not a neutral efficiency aid. Scrutinize the chain of custody for prompts and outputs. If key artifacts are missing, seek adverse inference, suppression, or limiting instructions.

The Forensic Focus article urges law enforcement agencies to classify AI uses by risk level, treating anything factual that could enter evidence as high risk. It calls for clear disclosures on reports, preserved “redline” changes showing human edits, and treatment of prompts and outputs as evidence-adjacent artifacts requiring timestamps and user identification. When agencies ignore these recommendations, defense counsel has legitimate grounds to argue that the evidence was produced through inadequate processes.

Challenging Admissibility:

The Black Box Problem

Generative-AI outputs present a classic reliability challenge under the various standards for the admissibility of expert testimony. Because these systems rely on probabilistic large-language-model architectures rather than deterministic logic, the same input prompt may generate different outputs on different runs. As Ferguson notes, when a prosecutor cannot explain how the model arrived at its conclusions, the lack of transparency, standard controls, and documented error-rates heighten the risk that such evidence fails the “reliability” factor required by expert testimony standards. When there is no meaningful replicability, no human-readable reasoning chain, and no quantified or documented error-rate specific to the context, the foundation of AI-generated work product becomes vulnerable to challenge.

Defense attorneys should argue that without the full record of prompts, testing methodology, and validation steps, AI-generated or AI-edited portions of evidence fail basic tests for scientific or technical reliability. Pre-trial hearings should probe whether the government can demonstrate that the model’s outputs were reliable, verified, and free from bias.

Axon’s own quality survey asks officers to flag problems including “False Statements,” “Incorrect Timelines,” “Subjective/Vague Word Choice,” “Irrelevant Details,” and “Not Enough Details.” The company’s recognition that these errors occur provides powerful ammunition for reliability challenges.

The Critical Role

of Cross-Examination

Cross-examination must focus on the “human in the loop” who supposedly verified the AI’s work. That person is the linchpin of reliability. Ferguson suggests specific lines of inquiry: What specific source material did the officer consult when reviewing the AI draft? How long did they spend comparing the AI summary to the actual body-camera recording? What discrepancies did they find, and where are their verification notes?

If the reviewer cannot credibly account for the verification process, both their credibility and the report’s integrity collapse. Did they make the minimum required edits or simply click “approve”? Can they identify which sections they changed and why? Were they aware of the system’s limitations in chaotic scenes or with multiple speakers?

Ferguson notes that Axon built in safeguards requiring officers to correct obviously nonsensical statements like “the international spy network was revealed to be a group of highly trained squirrels.” If an officer cannot explain what they verified, the entire review process is called into question. Civil liberties advocates have pointed out that while Draft One requires officers to “review, edit and sign off on accuracy,” the system’s auditability remains opaque, making meaningful verification difficult to prove.

Prosecutorial Ethics

and Spoliation

The American Bar Association Standing Committee on Ethics and Professional Responsibility issued Formal Opinion 512, “Generative Artificial Intelligence Tools,” on July 29, 2024. The guidance makes clear that a lawyer’s ethical duties – particularly under Model Rules 1.1 (competence), 1.6 (confidentiality), 3.3 (candor to the tribunal), and 5.1 and 5.3 (supervision) – apply equally when using generative AI.

For prosecutors, these duties extend to the investigative process. A prosecutor who fails to seek out and turn over the complete provenance of AI-generated evidence – including prompts, error logs, and validation steps – may be breaching ethical duties by concealing impeaching information about the prosecution’s evidence-gathering methods.

When AI artifacts are missing, defense counsel should pursue aggressive remedies based on spoliation principles. These “artifacts,” the digital traces revealing how an AI output was created, include the original prompts, intermediate drafts, model versions, system configurations, edit histories, error logs, audit trails, and any human review notes or redline versions. Like laboratory bench notes or raw forensic data, these materials permit replication, testing, and assessment of the prosecution’s claimed result.

If an agency cannot produce these artifacts, the evidentiary foundation collapses. Courts have long held that when potentially exculpatory evidence is lost through negligence or bad faith, sanctions may include exclusion, adverse-inference instructions, or even dismissal. The same logic applies here. When a department admits it cannot identify which reports were AI-generated or that it failed to preserve prompt logs and draft histories, a presumption of unreliability should attach.

Without these artifacts, neither the prosecution nor the court can verify what information the AI received, what it produced, or what edits a human reviewer made. This uncertainty strikes at core due process principles of accuracy, transparency, and the ability to confront and test evidence.

Defense attorneys should seek orders compelling preservation and production of all AI artifacts at the outset of litigation. Where agencies failed to maintain them, counsel should argue spoliation and seek remedies ranging from exclusion of the AI-derived material to adverse-inference instructions to suppression where the absence prevents meaningful reliability testing.

Fair and Just Prosecution, a national network of elected prosecutors and criminal justice advocates committed to promoting a justice system grounded in fairness and equity, has documented multiple cases where AI-drafted reports introduced errors favoring the prosecution. In its June 2025 issue brief “AI-Generated Police Reports: High-Tech, Low Accuracy, Big Risks,” the organization warned that these tools pose serious threats to accuracy and due process. The brief identified errors including factual misstatements, misattributions, and omissions that systematically disadvantaged defendants, from mischaracterizing the location of contraband in a vehicle to omitting exculpatory details from witness statements.

Conclusion

The efficiency promise of AI does not reduce the government’s burden to prove its case. The emerging consensus from NIST, the DOJ, state courts, prosecutors themselves, and Ferguson’s comprehensive legal analysis is clear: disclosure, testing, documentation, and human verification are mandatory, not optional.

As Ferguson warns, “the traditional standards of reasonable suspicion, probable cause, and proof beyond a reasonable doubt – historically grounded in detailed factual narratives drafted by police officers – are now being replaced by AI-generative suspicion.” This shift is not theoretical. It is happening now in departments across the country.

The criminal justice system’s legitimacy rests on the reliability of its evidence-based factual narratives. When those narratives become partly machine-authored – generated by systems that hallucinate, embed bias, and operate as black boxes – the defense bar’s role becomes even more critical. Defense attorneys must ensure that the constitutional duty to verify, question, and test remains paramount, not delegated to algorithms optimized for efficiency rather than truth and factual accuracy.

The efficiency of automation cannot displace the Constitution’s various safeguards. If the factual foundation of a criminal case rests on AI outputs that cannot be independently verified, tested for bias, or traced to their source, then that foundation is too flimsy to support a conviction. Defense counsel must make that argument in discovery, in motions, in cross-examination, and before juries until courts genuinely understand that AI-assisted evidence, like any other scientific or technical evidence, must meet established standards of reliability before it can be used to strip people of their liberty.

Sources: American Bar Association. “Generative AI and the Legal Profession: Ethical Considerations.” 2024; Chan, Samantha, et al. “Conversational AI Powered by Large Language Models Amplifies False Memories in Witness Interviews.” arXiv, August 2024; Duke Law Center for Science and Justice. “Admissibility of AI-Generated Evidence.” 2025; Electronic Frontier Foundation. “Axon’s Draft One Is Designed to Defy Transparency.” July 2025; Fair and Just Prosecution. “AI-Generated Police Reports: High-Tech, Low Accuracy, Big Risks.” June 2025; Ferguson, Andrew Guthrie. “Generative Suspicion and the Risks of AI-Assisted Police Reports.” Northwestern University Law Review, 2025; Forensic Focus. “Managing GenAI Risk in Police Investigations.” Nov. 11, 2025; National Center for State Courts. “AI and the Courts: Resources.” 2024; National Conference of State Legislatures. “Artificial Intelligence and Law Enforcement: The Federal and State Landscape.” September 2024; The Policing Project at NYU Law. “Police Use of AI – Model Statute.” Sept. 8, 2025; U.S. Department of Justice. “Artificial Intelligence and Criminal Justice: Final Report.” December 2024.

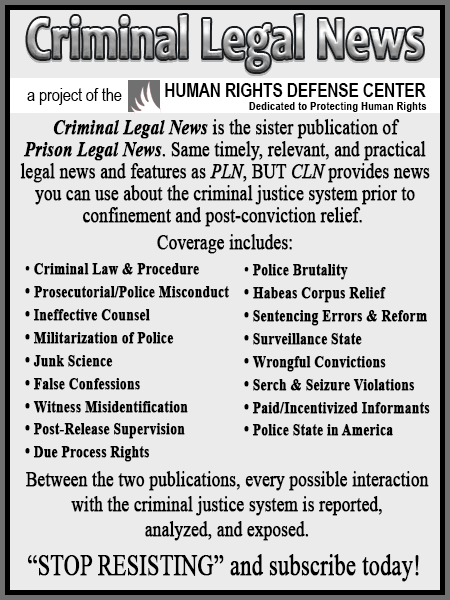

As a digital subscriber to Criminal Legal News, you can access full text and downloads for this and other premium content.

Already a subscriber? Login