Traditional Forensic Ballistics Comparisons Giving Way to Virtual 3D Methods

by Casey J. Bastian

Forensic experts have been conducting ballistics comparisons and testifying about them in court for more than 100 years. Experts frequently imply that determining whether a specific gun fired a particular bullet is straightforward: simply search the bullet for distinctive marks left by the gun's barrel. If the marks are similar to the unique characteristics of the barrel, you have a match. Despite what is shown on TV, in real life the process is neither that simple nor that accurate.

In 2009, the National Academy of Sciences ("NAS") issued a report on forensic analysis and found that "sufficient studies have not been done to understand the reliability and reproducibility of the methods." In 2016, a report by the President's Council of Advisors on Science and Technology ("PCAST") validated the NAS findings. PCAST noted that, even after the intervening seven years, "the current evidence still falls short of the scientific criteria for foundational validity."

These concerns were reflected in a 2020 opinion by the U.S. District Court for the District of Columbia. United States v. Harris, 502 F.Supp.3d 28 (D.D.C. 2020). The judge limited what the firearms expert could say when testifying about the ballistic evidence. The expert could not use the term "match," could not "state his expert opinion with any level of statistical certainty," and could not use the phrases "to the exclusion of all other firearms" or "to a reasonable degree of scientific certainty."

The scientific community has responded to these issues with research supported by the National Institute of Justice ("NIJ"). Over the last decade, the NIJ has supported efforts to establish a "statistical basis for firearm toolmark comparisons and move from 2D to 3D comparisons." This work is being done in collaboration with the National Institute of Standards and Technology ("NIST"), which created the NIST Ballistics Toolmark Research Database ("NBTRD") in 2016. To build the database, NIST mechanical engineer Xiaoyu Alan Zheng attended various law enforcement conferences and asked police departments and other agencies to test-fire their reference firearms collections and submit the results.

Law enforcement agencies sent Zheng bullets, cartridge cases, and information about the guns and ammunition used. Zheng then created virtual models of the toolmarks using a high-resolution 3D microscope. Compared with 2D images, the 3D images capture far more detail and are not affected by lighting conditions, which allows for more objective comparisons of the evidence.

As the database grows, more information becomes available to help address the "foundational uncertainty" cited by the judge in Harris. "We made it a centralized research hub of images of firearm toolmarks on cartridge cases and bullets," said Zheng. Before the database existed, researchers had access to only a limited number and variety of samples. A key advantage of 3D imaging is that it produces high-definition scans of the actual surface with high repeatability. With 3D images, the "data is a direct measurement and digital representation of the surface topography." Because the data do not depend on lighting, both algorithms and examiners can make more exact comparisons.

The leading developer of high-definition 3D forensic firearms imaging is Cadre Research Labs, founded by Ryan Lilien. He notes that because time is an important factor in investigations, 3D scanning can "greatly simplify the process." Traditionally, an examiner making a comparison must retrieve the original specimen from the evidence archives. If the specimen must be mailed to another jurisdiction, that can raise chain-of-custody issues and require official approval. With 3D data, an examiner simply clicks on the file. This allows for remote verification, which can help labs reduce tremendous backlogs.

Lilien said, "Because it doesn't have to be done at the same site, a lab with a backlog of cases can go to another lab, maybe in a different part of the state, that has a little extra capacity." Working with virtual data also allows a second examiner to independently verify the initial conclusions. "You can truly make the verification process blind," said Lilien.

Although 3D imaging is now routinely used to rank samples in the database against the actual evidence, it has not yet replaced traditional methods in the courtroom. A main obstacle is cost. Traditional 2D analysis systems cost between $50,000 and $80,000. The 3D systems can run upwards of $250,000, "and of course you are going to need to train your people to use it, and develop a plan for deployment, validation, and quality control," said Zheng.

Establishing acceptable standards is another issue. The NBTRD is a research tool, and its data are not yet used in actual casework. NIST is working with the Federal Bureau of Investigation and the Netherlands Forensic Institute to "develop methods and reference data for statistical approaches to characterize the evidentiary strength or uncertainty of a comparison result."

Zheng believes that if a large enough data set is collected, appropriate models can be developed to reach the ultimate goal of presenting comparison results in a courtroom with a high degree of certainty. He says that building an appropriately large reference population in the data sets will necessarily be a slow process. Zheng and Lilien agree that it could be three to five years before 3D virtual forensics are accepted by the courts. Both agree that the "guardrails for responsible use of the technology have to be put in place." If this 3D technology reduces wrongful convictions, it will be worth the wait.

Source: nij.ojp.gov

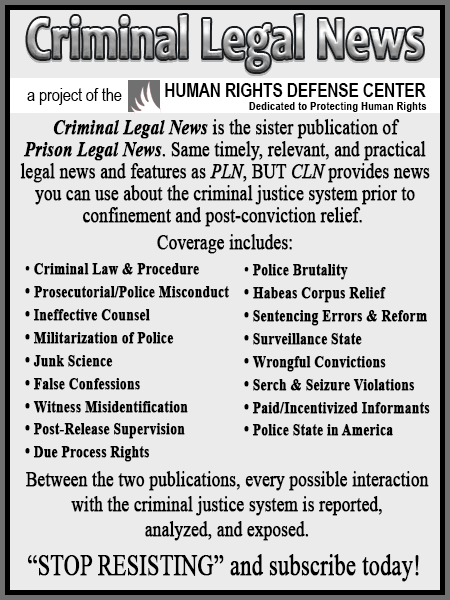
